Dynamic Bayesian Networks for Vehicle Classification in Video

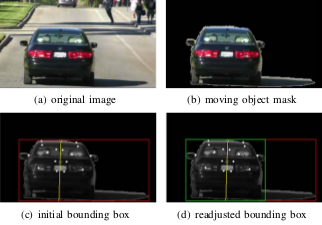

Vehicle classification has evolved into a significant subject of study because of its importance in autonomous navigation, traffic analysis, surveillance and security systems, and transportation management. We present a system that classifies a vehicle, given its direct rear-side view, into one of four classes: Sedan, Pickup truck, SUV/Minivan, and unknown. A feature set of tail-light and vehicle dimensions is extracted and fed to a feature selection algorithm. The resulting feature vector is then processed by a Hybrid Dynamic Bayesian Network (HDBN) to classify each vehicle.
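
To make the classification step concrete, here is a minimal Python sketch of one simple hybrid Bayesian model. It uses a discrete class variable that stays fixed over the frames of a vehicle track, with continuous Gaussian observations in each frame. It is not the HDBN from the paper. The two features, the class priors, means and standard deviations, the function name `classify_track`, and the `unknown_threshold` rule for the unknown class are all hypothetical placeholders.

```python
import math

# Hypothetical per-class Gaussian models for two continuous features
# (e.g. tail-light spacing / vehicle width, vehicle height / width).
# All numbers are illustrative placeholders, not values from the paper.
CLASS_MODELS = {
    "Sedan":        {"prior": 0.4, "mean": (0.70, 0.55), "std": (0.05, 0.05)},
    "Pickup truck": {"prior": 0.3, "mean": (0.80, 0.75), "std": (0.05, 0.05)},
    "SUV/Minivan":  {"prior": 0.3, "mean": (0.75, 0.90), "std": (0.05, 0.05)},
}


def gaussian_log_pdf(x, mean, std):
    """Log-density of a 1D Gaussian."""
    return -0.5 * math.log(2 * math.pi * std * std) - (x - mean) ** 2 / (2 * std * std)


def classify_track(frames, unknown_threshold=0.8):
    """Fuse per-frame feature vectors of one vehicle into a class posterior.

    The class is a discrete node that does not change over time; each frame
    adds a continuous (Gaussian) observation, so the log-posterior is the
    log-prior plus the sum of per-frame log-likelihoods.
    """
    log_post = {c: math.log(m["prior"]) for c, m in CLASS_MODELS.items()}
    for features in frames:
        for c, m in CLASS_MODELS.items():
            log_post[c] += sum(
                gaussian_log_pdf(x, mu, s)
                for x, mu, s in zip(features, m["mean"], m["std"])
            )
    # Normalize in a numerically stable way (subtract the max log value).
    top = max(log_post.values())
    z = sum(math.exp(v - top) for v in log_post.values())
    posterior = {c: math.exp(v - top) / z for c, v in log_post.items()}
    best = max(posterior, key=posterior.get)
    # Fall back to "unknown" when no class is confident enough.
    label = best if posterior[best] >= unknown_threshold else "unknown"
    return label, posterior
```

For example, `classify_track([(0.70, 0.55), (0.71, 0.56)])` returns the label `"Sedan"` under these made-up parameters. An empty track falls back to `"unknown"` because no class prior reaches the threshold.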

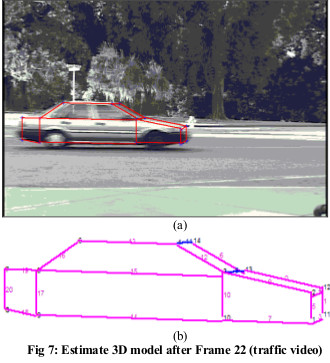

Unsupervised Learning for Incremental 3-D Modeling

Learning-based incremental 3D modeling of traffic vehicles from uncalibrated video data streams has enormous application potential in traffic monitoring and intelligent transportation systems. In our research, video data from a traffic surveillance camera is used to incrementally develop the 3D model of vehicles using clustering-based unsupervised learning. Geometrical relations based on a generic 3D vehicle model map 2D features to 3D. The 3D features are then adaptively clustered over the frames to incrementally generate the 3D model of the vehicle.

Results are shown for both simulated and real traffic video and are evaluated by a structural performance measure.
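
The adaptive clustering step can be illustrated with a simple sequential clustering of 3D points, sketched below in Python. This is an assumed stand-in, not the paper's algorithm. The fixed `radius` threshold, the running-mean update and the class name `IncrementalClusterer` are illustrative choices. The sketch also assumes that the 2D-to-3D mapping from the generic vehicle model has already produced the 3D points.

```python
import math


class IncrementalClusterer:
    """Sequential ("leader"-style) clustering of 3D points arriving frame by frame.

    A point joins the nearest existing cluster if it lies within `radius`;
    otherwise it starts a new cluster. Cluster centers are running means,
    so they are refined incrementally as more frames are processed.
    """

    def __init__(self, radius):
        self.radius = radius
        self.centers = []  # running-mean [x, y, z] of each cluster
        self.counts = []   # number of points absorbed by each cluster

    def add_frame(self, points):
        for p in points:
            # Find the nearest existing cluster center.
            best, best_dist = None, float("inf")
            for i, c in enumerate(self.centers):
                d = math.dist(p, c)
                if d < best_dist:
                    best, best_dist = i, d
            if best is not None and best_dist <= self.radius:
                # Incremental mean update: c <- c + (p - c) / n
                self.counts[best] += 1
                n = self.counts[best]
                self.centers[best] = [c + (x - c) / n for c, x in zip(self.centers[best], p)]
            else:
                # Too far from every center: start a new cluster.
                self.centers.append(list(p))
                self.counts.append(1)
        return self.centers
```

Feeding two frames with points near `(0, 0, 0)` and `(5, 0, 0)` yields two clusters whose centers move toward the newly observed points. A point farther than `radius` from every center starts a new cluster, which lets the model grow as new parts of the vehicle are observed.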

Continuous learning of a multi-layered network topology in a video camera network

A multilayered camera network architecture is proposed in which the nodes at different layers are entry/exit points, cameras, and clusters of cameras. This paper integrates face recognition to provide robustness to appearance changes and to better model the time-varying traffic patterns in the network. The statistical dependence between the nodes, indicating the connectivity and traffic patterns of the camera network, is represented by a weighted directed graph and by transition times that may have multimodal distributions. The traffic patterns and the network topology may change in a dynamic environment. We propose a continuous learning mechanism based on a Monte Carlo Expectation-Maximization algorithm to capture the latent, dynamically changing characteristics of the network topology. In the experiments, a nine-camera network with twenty-five nodes (at the lowest level) is analyzed both in simulation and in real-life experiments, and the results are compared with previous approaches.
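
The Python sketch below gives a rough illustration of a weighted directed graph that keeps adapting. It maintains exponentially decayed transition counts between nodes and normalizes them into transition probabilities. It is a simplified stand-in and does not implement Monte Carlo Expectation-Maximization. It also assumes that each transition arrives already labeled with its source and destination nodes. The class name `TransitionGraph` and the `decay` parameter are hypothetical.

```python
from collections import defaultdict


class TransitionGraph:
    """Weighted directed graph of node-to-node transitions with forgetting.

    Each new observation first decays all earlier evidence by `decay`,
    so edge weights follow a topology that changes over time.
    """

    def __init__(self, decay=0.99):
        self.decay = decay
        self.weights = defaultdict(float)  # (src, dst) -> decayed count
        self.times = defaultdict(list)     # (src, dst) -> observed travel times

    def observe(self, src, dst, travel_time):
        # Forget old evidence, then add the new transition.
        for edge in self.weights:
            self.weights[edge] *= self.decay
        self.weights[(src, dst)] += 1.0
        self.times[(src, dst)].append(travel_time)

    def transition_prob(self, src, dst):
        """Normalized out-edge weight of src -> dst (0.0 if src has no edges)."""
        out = sum(w for (s, _), w in self.weights.items() if s == src)
        return self.weights.get((src, dst), 0.0) / out if out else 0.0
```

The travel times are stored per edge so that a multimodal density, for example a Gaussian mixture, could be fitted to them separately. That fitting step is omitted here.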

Continuously Evolvable Bayesian Nets For Human Action Analysis in Videos

This paper proposes a novel data-driven, continuously evolvable Bayesian Net (BN) framework to analyze human actions in video. In unpredictable video streams, only a few generic causal relations, their interrelations, and the dynamic changes of these interrelations are used to probabilistically estimate relatively complex human activities. Based on the evidence available in streaming video, the proposed BN can dynamically change the number of nodes in every frame and use different relations for the same nodes in different frames. The performance of the proposed BN framework is shown on complex movie clips in which actions such as hand on head or waist, standing close, and holding hands take place among multiple individuals under changing pose conditions. The proposed BN can represent and recognize human activities in a scalable manner.

How Current BNs Fail to Represent Evolvable Pattern Recognition Problems and a Proposed Solution

In the real world, systems and processes often evolve without fixed and predictable dynamic models. To represent such applications, we need uncertainty models, such as Bayesian Nets (BNs), that are formed online in a self-evolving, data-driven way. However, current BN frameworks cannot handle simultaneous scalability in the model structure and in the causal relations. We show how current BNs fail in applications from several fields, ranging from computer vision to database retrieval to medical diagnostics. We propose a novel Structure Modifiable Adaptive Reason-building Temporal Bayesian Networks (SmartBN) framework that scales under uncertainty in both structure and causal relations. We evaluate its performance on a 3D model building application for vehicles in traffic video.

Integrating Relevance Feedback Techniques for Image Retrieval

We introduce an image relevance reinforcement learning (IRRL) model for integrating existing relevance feedback (RF) techniques in a content-based image retrieval system. Various integration schemes are presented, and a long-term shared memory is used to exploit the retrieval experience of multiple users. The experimental results show that integrating multiple RF approaches gives better retrieval performance than using one RF technique alone. Furthermore, the concept digesting technique significantly reduces the storage demand.
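
Below is a minimal Python sketch of one way a long-term shared memory might be combined with several RF techniques. Everything in it is an assumption for illustration: the reward of +1/-1, the update rule `Q <- Q + alpha * (reward - Q)`, the linear weighting of per-technique scores, and the names `SharedRelevanceMemory` and `integrated_score`. It does not implement the IRRL model or the concept digesting technique.

```python
from collections import defaultdict


class SharedRelevanceMemory:
    """Long-term (concept, image) relevance values shared by all users.

    Values are updated with a reinforcement-style rule
    Q <- Q + alpha * (reward - Q), with reward +1 for a relevant judgment
    and -1 for a non-relevant one.
    """

    def __init__(self, alpha=0.5):
        self.alpha = alpha
        self.q = defaultdict(float)

    def feedback(self, concept, image_id, relevant):
        reward = 1.0 if relevant else -1.0
        key = (concept, image_id)
        self.q[key] += self.alpha * (reward - self.q[key])

    def value(self, concept, image_id):
        return self.q.get((concept, image_id), 0.0)


def integrated_score(rf_scores, weights, memory, concept, image_id):
    """Weighted sum of scores from several RF techniques plus the memory value."""
    combined = sum(w * s for w, s in zip(weights, rf_scores))
    return combined + memory.value(concept, image_id)
```

The memory is keyed only by concept and image, not by user. Feedback from one user therefore changes the scores that every later user sees.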

Feature relevance estimation for image databases

Content-based image retrieval methods based on the Euclidean metric expect the feature space to be isotropic. They suffer from the unequal differential relevance of features when computing the similarity between images in the input feature space. We propose a learning method that attempts to overcome this limitation by capturing the local differential relevance of features based on user feedback. This feedback, in the form of accepted or rejected examples generated in response to a query image, is used to locally estimate the strength of features along each dimension. This results in local neighborhoods that are constricted along the most relevant feature dimensions and elongated along less relevant ones. We provide experimental results that demonstrate the efficacy of our technique using real-world data.
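
One simple way to turn accepted examples into per-dimension weights is the inverse standard deviation heuristic. It treats the dimensions along which the accepted images agree closely as relevant. The Python sketch below uses that heuristic as a stand-in for the paper's local relevance estimate, which may differ. The function names are hypothetical, and rejected examples are ignored here for brevity.

```python
import statistics


def relevance_weights(accepted, eps=1e-6):
    """Per-dimension weights from user-accepted examples (inverse std. dev. heuristic).

    Dimensions along which the accepted images agree closely (small spread)
    get large weights; widely spread dimensions get small weights.
    Weights are normalized to sum to 1.
    """
    dims = len(accepted[0])
    raw = [1.0 / (statistics.pstdev([a[i] for a in accepted]) + eps) for i in range(dims)]
    total = sum(raw)
    return [r / total for r in raw]


def weighted_distance(x, y, weights):
    """Weighted Euclidean distance; large weights shrink the neighborhood along that axis."""
    return sum(w * (a - b) ** 2 for w, a, b in zip(weights, x, y)) ** 0.5
```

Take the accepted examples `(1.0, 0.0)`, `(1.0, 5.0)` and `(1.0, 10.0)`. Almost all of the weight goes to the first dimension, so under the weighted distance `(1.0, 9.0)` is closer to the query `(1.0, 5.0)` than `(2.0, 5.0)` is. This reverses the plain Euclidean ranking, and the neighborhood is constricted along the first dimension and elongated along the second.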

Probabilistic Feature Relevance Learning for Content-Based Image Retrieval

Most current image retrieval systems use "one-shot" queries to a database to retrieve similar images. Typically, a K-nearest-neighbor algorithm is used, in which the weights measuring feature importance along each input dimension remain fixed (or are manually tweaked by the user) in the computation of a given similarity metric. In this paper, we present a novel probabilistic method that enables image retrieval procedures to automatically capture feature relevance based on the user's feedback and that is highly adaptive to query locations. Experimental results are presented that demonstrate the efficacy of our technique using both simulated and real-world data.
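
The Python sketch below shows the general shape of feedback-driven relevance weighting inside a K-nearest-neighbor search. Several parts are assumptions made for illustration: the relevance estimate (the fraction of accepted examples among those close to the query along one dimension), the exponential weighting with a `temperature` parameter, and all function names. Together they approximate the idea described above rather than reproduce the paper's estimator.

```python
import math


def feature_relevance(query, feedback, window):
    """Estimate, per dimension, how well closeness to the query predicts acceptance.

    `feedback` is a list of (vector, accepted) pairs. For dimension i, r_i is
    the fraction of accepted examples among those whose i-th coordinate lies
    within `window` of the query's i-th coordinate (0.0 if none do).
    """
    rel = []
    for i, q in enumerate(query):
        near = [ok for x, ok in feedback if abs(x[i] - q) <= window]
        rel.append(sum(near) / len(near) if near else 0.0)
    return rel


def exponential_weights(relevance, temperature):
    """Softmax-style weights w_i = exp(T * r_i) / sum_j exp(T * r_j)."""
    exps = [math.exp(temperature * r) for r in relevance]
    total = sum(exps)
    return [e / total for e in exps]


def knn(query, database, weights, k):
    """Return the k database vectors closest to the query under the weighted metric."""
    def dist(x):
        return sum(w * (a - b) ** 2 for w, a, b in zip(weights, query, x))
    return sorted(database, key=dist)[:k]
```

Consider query `(0, 0)` with accepted examples `(0.1, 3.0)` and `(0.05, -2.0)`, rejected examples `(2.0, 0.1)` and `(3.0, -0.1)`, and `window=0.5`. The relevance estimates are `[1.0, 0.0]`. With `temperature=5` the first dimension gets about 99% of the weight, so `(0.0, 4.0)` is retrieved ahead of `(1.5, 0.0)`. Larger `temperature` values make the weighting sharper.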

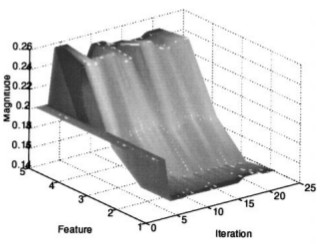

Probabilistic feature relevance learning for online indexing

In this paper, we present a novel probabilistic method that enables image retrieval procedures to automatically capture feature relevance based on the user’s feedback and that is highly adaptive to query locations. The method uses feedback provided by the user to estimate the strength of each feature in predicting the query, thereby capturing relevance measures for online indexing. Experimental results are presented that demonstrate the efficacy of our technique using both simulated and real-world data.
